The Productivity Paradox, Version 3.0

Weekly Agentic Engineering Digest | Issue #10 | 27 April 2026

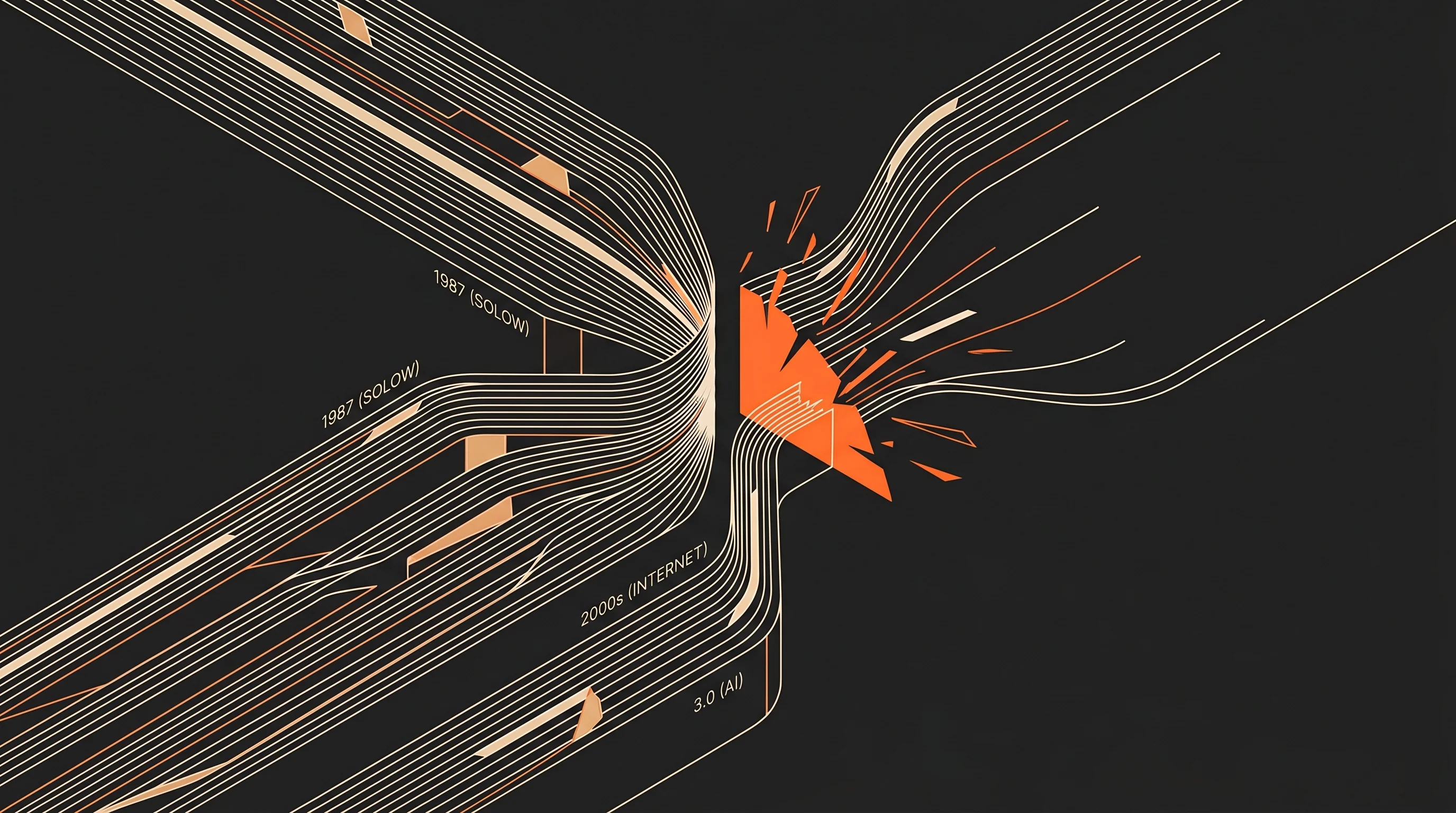

In 1987, Robert Solow wrote the line that launched a thousand economics seminars: you can see the computer age everywhere except in the productivity statistics. Version 1.0 of the paradox. Version 2.0 arrived with the internet and SaaS in the early 2000s, generating the same debate before the gains showed up in the data. We are now inside version 3.0. Billions flow into AI, models, and compute. The hard productivity numbers are moving, but slowly. The sceptical reaction is predictable: is the investment justified?

That is, I would argue, the wrong question.

From Flo's AI Lab

Two events shaped this week's thinking.

Monday: Meetup #4 in Munich at Codecentric AG. Around 80 practitioners, including vibe coders, product managers, and consultants at every stage of the learning curve. The questions have shifted since January. Then, the room wanted definitions. Now it wants deployment strategies. That change, in twelve weeks, is more informative than any market survey.

Thursday: St. Gallen. Together with Oliver Gerstheimer of chilli mind, I ran a four-hour executive seminar for roughly 20 IT heads and CIOs at the University of St. Gallen (HSG). Oliver covered communication and AI transformation. I walked through Claude Code live, using a University of St. Gallen case study to anchor the examples. The delegates were sharp. Their hardest questions were about governance and attribution. Good hard questions.

One observation bridges both rooms: the people closest to the technical work are often least certain about the strategic implications. The people furthest from it are most certain, and frequently wrong in both directions. That gap is where most of the value will be won or lost over the next three years.

Bottlenecks Shift

In the early 1980s, storage was the scarce resource. Code was written in languages that treated hardware efficiency as a first principle, because inefficiency was expensive. Speicher, the German word for both memory and storage, was the bottleneck, to use Werner Kirsch's framing.

Today, a single browser tab can occupy a gigabyte of RAM. Storage costs next to nothing per unit. The bottlenecks have moved to time-to-market, developer attention, and iteration speed. Languages like Python or JavaScript would have been indefensible in 1985; they are profligate with hardware, but they trade cheap machine resources for scarce human time.

Someone extrapolating in 1985 from the constraints of that moment could not have predicted the software industry of today. The error would not have been the forecast. It would have been the assumption that the bottlenecks remain fixed.

We are making that assumption now with AI. Electricity consumption and compute costs are discussed as permanent limits. In five to ten years, the picture will look different, through more efficient architectures, specialised models, and on-device inference. Forecasting from today's bottlenecks produces a description of a world that will not arrive.

Invariance-Breaking Third Variables

Werner Kirsch, one of my professors at LMU München, had a concept that has stayed with me. He called them invarianzenbrechende Drittvariablen: invariance-breaking third variables. Factors that make previously incompatible properties suddenly compatible.

The textbook example is Porter. A generation of strategy courses taught that firms must choose between cost leadership and differentiation. Then Amazon arrived: more selection than any bookshop in history, consistently cheaper. The internet, together with what it enabled in logistics and data, was the third variable.

Three examples from this week:

PR, SEO, and design. What cost 10,000 euros at an agency five years ago dropped to 100 euros on freelance platforms two years later. Today, an agentic engineering workflow delivers usable output for under one euro per run. The quality does not match a top-tier agency, but it is sufficient for many applications that previously never happened because the economics were impossible.

Websites. A bespoke agency build: 150,000 euros. A template system: 15,000. AI-assisted development, what practitioners call vibe coding, brings the figure closer to 1,500. Businesses that previously lacked a web presence now have one. The market did not become cheaper at the top. It opened at the bottom.

Financial data terminals. What institutional investors accessed through Bloomberg for decades is now reachable by individuals through platforms and APIs. Day trading has been democratised, with all the advantages and complications that follow.

Clayton Christensen called this pattern non-consumption. The largest markets for disruptive innovations are not the existing customers of premium providers. They are the people who were not participating at all.
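The price steps in the first two examples are easy to check. The following is a minimal Python sketch, not a market model: the figures are the round numbers quoted above, "under one euro per run" is treated as an upper bound of 1 euro, and the names `price_tiers` and `fold_reductions` are purely illustrative.

```python
# Price points quoted in the text, in euros, most expensive tier first.
# Assumption: "under one euro per run" is entered as an upper bound of 1,
# and "closer to 1,500" is entered as 1,500.
price_tiers = {
    "PR, SEO, and design": [10_000, 100, 1],
    "Websites": [150_000, 15_000, 1_500],
}


def fold_reductions(prices):
    """Return the factor by which the price falls between consecutive tiers."""
    return [earlier / later for earlier, later in zip(prices, prices[1:])]


for market, prices in price_tiers.items():
    steps = fold_reductions(prices)
    overall = prices[0] / prices[-1]
    print(f"{market}: step factors {steps}, overall factor {overall:,.0f}")
```

On these numbers, each step in the PR, SEO, and design example is a hundredfold drop, and each step in the website example is a tenfold drop. Drops of that size are what pull previous non-consumers into a market.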

Three Implications

Productivity will rise. The Solow paradox was resolved in version 1.0, and it was resolved again in version 2.0. There is no structural reason version 3.0 should be different. Anyone forecasting from today's bottlenecks is describing a world that will not materialise.

The incumbent replacement story has a second chapter. Instead of one new quasi-monopolist replacing old ones, what typically emerges is a large number of smaller providers serving niches that were previously uneconomical. For Mittelstand companies: existing positions carry more risk, new positions are more reachable. For private equity and family offices: concentration bets on incumbent platforms are riskier than they appear; small specialised operators deserve earlier attention.

The strategic question has changed. Not "where can we become more efficient?" but: which trade-offs that everyone accepts as natural law are actually bottlenecks in the process of disappearing? Claude Code and agentic engineering did not change these frameworks. Solow, Christensen, Porter, and Kirsch wrote long before any of this existed. What changes is where you point them.

The pattern repeats. The specifics do not.

Originally published on drfloriansteiner.com